Robotic Head Module for Human-Robot Non-Verbal Communication

interactive head robot

Abstract

Home-care robots differ from many other robots because they coexist closely with people, which makes interaction an unavoidable part of their function. To support human-robot communication, previous studies on robot motion design have focused on mimicking forms of human-to-human communication, such as facial expressions and gestures. These approaches let users interpret the robot's intention without an extensive learning process. Among the various communication modalities, head gestures play an important role: they convey not only simple cues of affirmation or negation but also more complex and subtle cues related to emotion and attitude. These observations motivate the development of a robotic head module tailored to home environments that supports social interaction through intuitive and expressive head gestures.

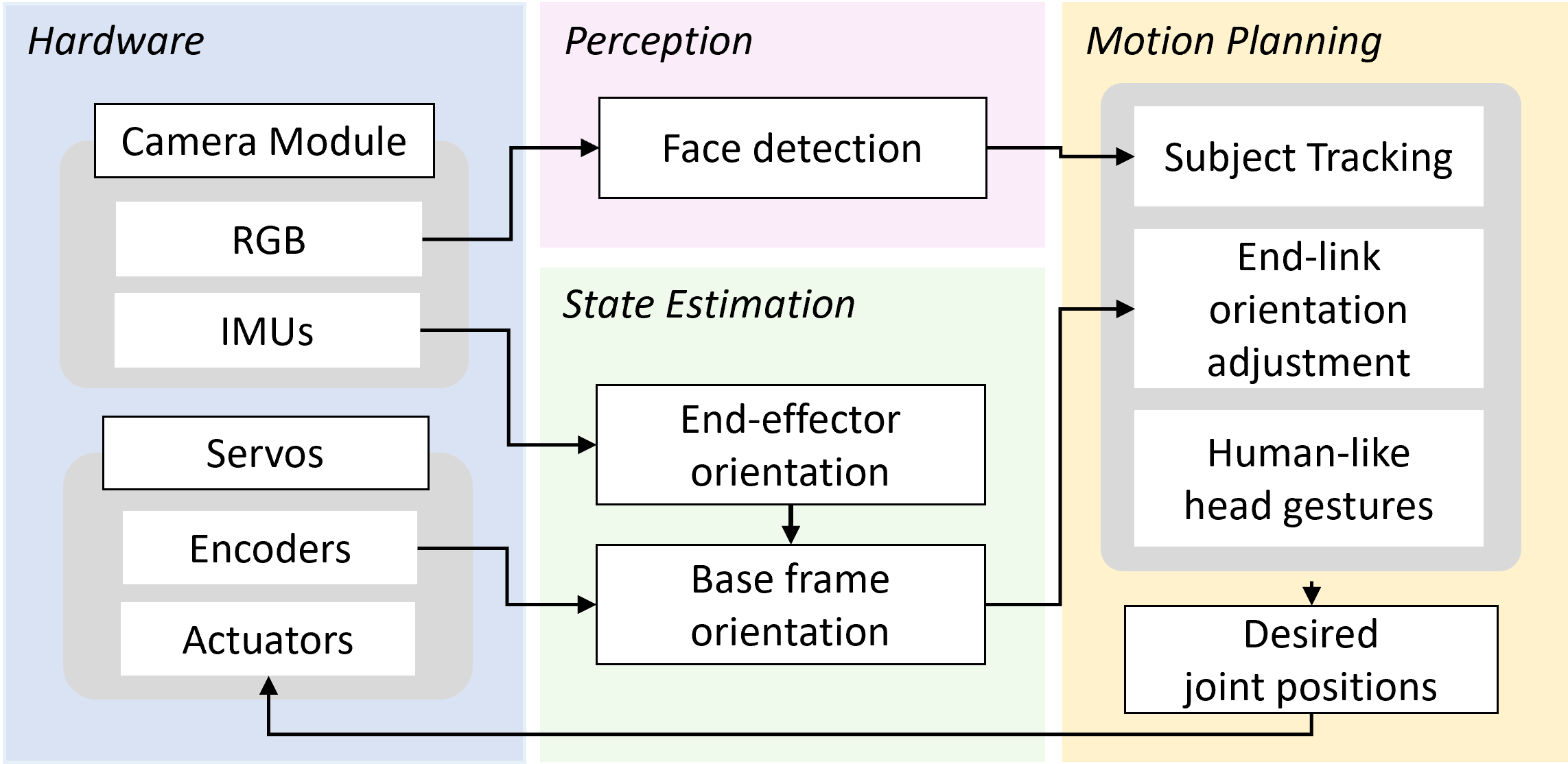

This work presents a 3-DOF robotic head module designed to support non-verbal human-robot communication in a variety of in-home scenarios. For effective deployment, two key features are considered: adaptability to diverse home environments and the ability to deliver clear non-verbal communicative cues. For adaptability, the module is compatible with a range of docking conditions, including different mounting orientations and dynamic platform states. For interaction, it can track a human subject and generate expressive, gesture-based cues. To meet these task requirements, an integrated system is developed that runs in real time through tightly coupled perception, motion control, and planning modules.

Selected Publications

Motion Planning Framework

Robot motion is defined by integrating three components: end-link orientation adjustment, human-like head gestures, and subject tracking.
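
Reading the three components as a composition of rotations gives the minimal sketch below. It assumes that tracking supplies a yaw and pitch toward the subject, that the active gesture supplies a (roll, pitch, yaw) offset on top of that heading, and that the base orientation in the gravity-aligned global frame is known, so the end-link adjustment reduces to expressing the global target relative to the base. The ZYX convention, the multiplication order, and names such as `compose_command` are our assumptions, not the paper's formulation. Converting the result into the three joint angles would additionally require the module's inverse kinematics, which this section does not give.

```python
import math
from typing import List, Tuple

Matrix = List[List[float]]


def rot_x(a: float) -> Matrix:
    c, s = math.cos(a), math.sin(a)
    return [[1.0, 0.0, 0.0], [0.0, c, -s], [0.0, s, c]]


def rot_y(a: float) -> Matrix:
    c, s = math.cos(a), math.sin(a)
    return [[c, 0.0, s], [0.0, 1.0, 0.0], [-s, 0.0, c]]


def rot_z(a: float) -> Matrix:
    c, s = math.cos(a), math.sin(a)
    return [[c, -s, 0.0], [s, c, 0.0], [0.0, 0.0, 1.0]]


def matmul(a: Matrix, b: Matrix) -> Matrix:
    return [[sum(a[i][k] * b[k][j] for k in range(3)) for j in range(3)] for i in range(3)]


def transpose(a: Matrix) -> Matrix:
    # For a rotation matrix the transpose is its inverse.
    return [list(row) for row in zip(*a)]


def compose_command(track_yaw: float, track_pitch: float,
                    gesture_rpy: Tuple[float, float, float],
                    base_in_world: Matrix) -> Matrix:
    """End-link orientation relative to the base (hypothetical composition order)."""
    # Subject tracking: point the head at the subject in the gravity-aligned global frame.
    r_track = matmul(rot_z(track_yaw), rot_y(track_pitch))
    # Head gesture: superimpose the current gesture offset on the tracked heading.
    roll, pitch, yaw = gesture_rpy
    r_gesture = matmul(rot_z(yaw), matmul(rot_y(pitch), rot_x(roll)))
    target_in_world = matmul(r_track, r_gesture)
    # End-link adjustment: express the global target in the base frame, so a tilted
    # or moving base (base_in_world, e.g. from an IMU) is compensated.
    return matmul(transpose(base_in_world), target_in_world)
```

With an untilted base and no tracking or gesture input, the command is the identity; for a tilted base, the command cancels the tilt so the head's global orientation is unchanged.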

End-Link Orientation Adjustment

When no head gesture is being performed, the end-link orientation is adjusted to hold a nominal pose in which the camera remains parallel to the ground. This allows the module to deliver non-verbal communication cues clearly across different mounting conditions and platform states.
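
This section does not say how the base orientation is obtained. Assuming an accelerometer rigidly attached to the base (a hypothetical sensor setup), a minimal sketch of the leveling step is: estimate roll and pitch from the measured gravity direction, then command the inverse of that tilt at the end link. The function names and the ZYX convention are our assumptions. The static-gravity estimate is only valid while the platform is not accelerating, so a moving platform would in practice call for fusing gyroscope data, for example with a complementary or Kalman filter.

```python
import math
from typing import List, Tuple

Matrix = List[List[float]]


def rot_x(a: float) -> Matrix:
    c, s = math.cos(a), math.sin(a)
    return [[1.0, 0.0, 0.0], [0.0, c, -s], [0.0, s, c]]


def rot_y(a: float) -> Matrix:
    c, s = math.cos(a), math.sin(a)
    return [[c, 0.0, s], [0.0, 1.0, 0.0], [-s, 0.0, c]]


def matmul(a: Matrix, b: Matrix) -> Matrix:
    return [[sum(a[i][k] * b[k][j] for k in range(3)) for j in range(3)] for i in range(3)]


def tilt_from_gravity(ax: float, ay: float, az: float) -> Tuple[float, float]:
    """Roll and pitch [rad] of the base from a static accelerometer reading.

    At rest the sensor measures the reaction to gravity, so a level base reads
    (0, 0, +g). Yaw is unobservable from gravity and is not estimated.
    """
    roll = math.atan2(ay, az)                     # full range, covers upside-down mounting
    pitch = math.atan2(-ax, math.hypot(ay, az))   # limited to [-pi/2, pi/2]
    return roll, pitch


def leveling_rotation(roll: float, pitch: float) -> Matrix:
    """End-link rotation relative to the base that cancels the base tilt.

    With the base orientation R_base = Ry(pitch) @ Rx(roll) (ZYX convention,
    yaw = 0), the leveling rotation is its inverse, Rx(-roll) @ Ry(-pitch).
    """
    return matmul(rot_x(-roll), rot_y(-pitch))
```

Multiplying the estimated base orientation by `leveling_rotation(roll, pitch)` yields the identity, i.e., a camera frame aligned with the ground. Whether the 3-DOF module can actually reach that orientation depends on its joint limits, which are not given here.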

Human-Like Head Gestures

To express non-verbal cues, three representative human-like head gestures are defined in the global frame using sinusoidal functions with distinct directions, amplitudes, and durations.
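
A minimal sketch of such a sinusoidal gesture follows, assuming each gesture oscillates about a single global axis with an integer number of cycles so that the head returns exactly to its nominal pose at the end. The `Gesture` class, the nod, shake, and tilt examples, and every numeric value are hypothetical illustrations; this section does not state which three gestures the module uses or their parameters.

```python
import math
from dataclasses import dataclass
from typing import Dict


@dataclass(frozen=True)
class Gesture:
    axis: str         # rotation axis in the global frame: "roll", "pitch" or "yaw"
    amplitude: float  # peak angle [rad]
    duration: float   # total gesture time [s]
    cycles: int       # full oscillations; an integer brings the head back to zero offset

    def offset(self, t: float) -> Dict[str, float]:
        """Angular offset [rad] at time t after gesture onset; zero outside [0, duration]."""
        angles = {"roll": 0.0, "pitch": 0.0, "yaw": 0.0}
        if 0.0 <= t <= self.duration:
            phase = 2.0 * math.pi * self.cycles * t / self.duration
            angles[self.axis] = self.amplitude * math.sin(phase)
        return angles


# Hypothetical example gestures; the amplitudes and durations are illustrative only.
NOD = Gesture("pitch", math.radians(15), 1.2, 2)
SHAKE = Gesture("yaw", math.radians(20), 1.6, 2)
TILT = Gesture("roll", math.radians(10), 1.0, 1)
```

Sampling `offset(t)` at the control rate and superimposing it on the nominal or tracking pose produces the gesture trajectory; the peak offset equals `amplitude`, reached a quarter of the way through each cycle.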

Subject Detection and Tracking

For subject tracking, a face is detected with a Haar cascade classifier, and the system adjusts the end-link orientation to keep the detected face centered in the camera frame.
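
The sketch below covers the tracking half of this step. It assumes the detector returns face rectangles in the (x, y, w, h) format produced by OpenCV's `CascadeClassifier.detectMultiScale`, picks the largest face, and converts its pixel offset from the image center into yaw and pitch corrections using a pinhole camera model and a proportional gain. The function names, the field-of-view inputs, the gain, and the sign convention are assumptions, not the module's published controller.

```python
import math
from typing import List, Optional, Tuple

Box = Tuple[int, int, int, int]  # (x, y, w, h), as returned by OpenCV's detectMultiScale


def pick_target(boxes: List[Box]) -> Optional[Box]:
    """Choose the largest detected face (assumed closest); None if nothing was detected."""
    return max(boxes, key=lambda b: b[2] * b[3], default=None)


def centering_correction(box: Box, image_size: Tuple[int, int],
                         fov_h: float, fov_v: float,
                         gain: float = 0.5) -> Tuple[float, float]:
    """Yaw and pitch increments [rad] that move the face toward the image center.

    Hypothetical sign convention: positive yaw turns the head left, positive
    pitch tilts it down (image v grows downward).
    """
    width, height = image_size
    # Focal lengths in pixels, derived from the horizontal/vertical field of view.
    fx = (width / 2) / math.tan(fov_h / 2)
    fy = (height / 2) / math.tan(fov_v / 2)
    x, y, w, h = box
    du = (x + w / 2) - width / 2   # > 0: face is right of center
    dv = (y + h / 2) - height / 2  # > 0: face is below center
    yaw_error = math.atan2(du, fx)
    pitch_error = math.atan2(dv, fy)
    # Proportional step: turn right (negative yaw) toward a face on the right,
    # tilt down (positive pitch) toward a face below center.
    return -gain * yaw_error, gain * pitch_error
```

Because the correction is recomputed on every new frame, a gain below 1 shrinks the residual error over successive frames while damping the response to jittery detections.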

BibTeX

@INPROCEEDINGS{10309462,
  author={Moon, Chaerim and Yamsani, Sankalp and Kim, Joohyung},
  booktitle={2023 32nd IEEE International Conference on Robot and Human Interactive Communication (RO-MAN)},
  title={Development of a 3-DOF Interactive Modular Robot with Human-like Head Motions},
  year={2023},
  volume={},
  number={},
  pages={141-146},
  keywords={Target tracking;3-DOF;Robot sensing systems;Manipulators;Mobile robots;Robots},
  doi={10.1109/RO-MAN57019.2023.10309462}
}